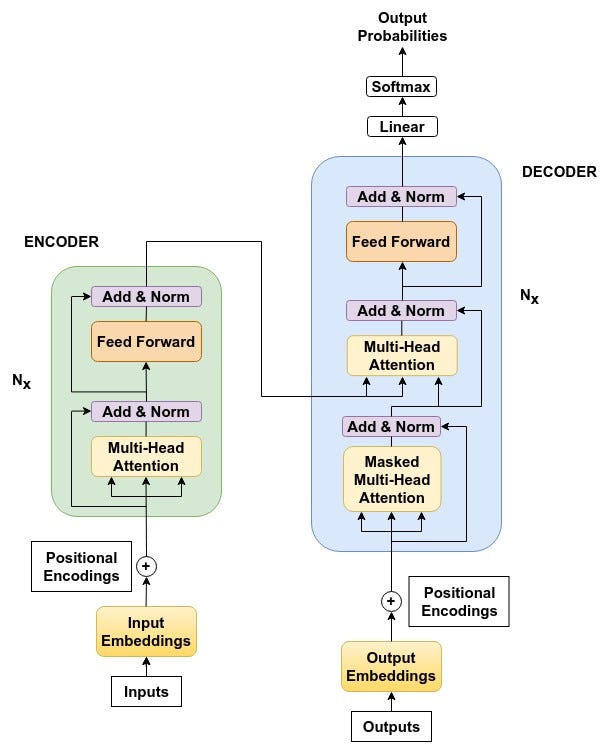

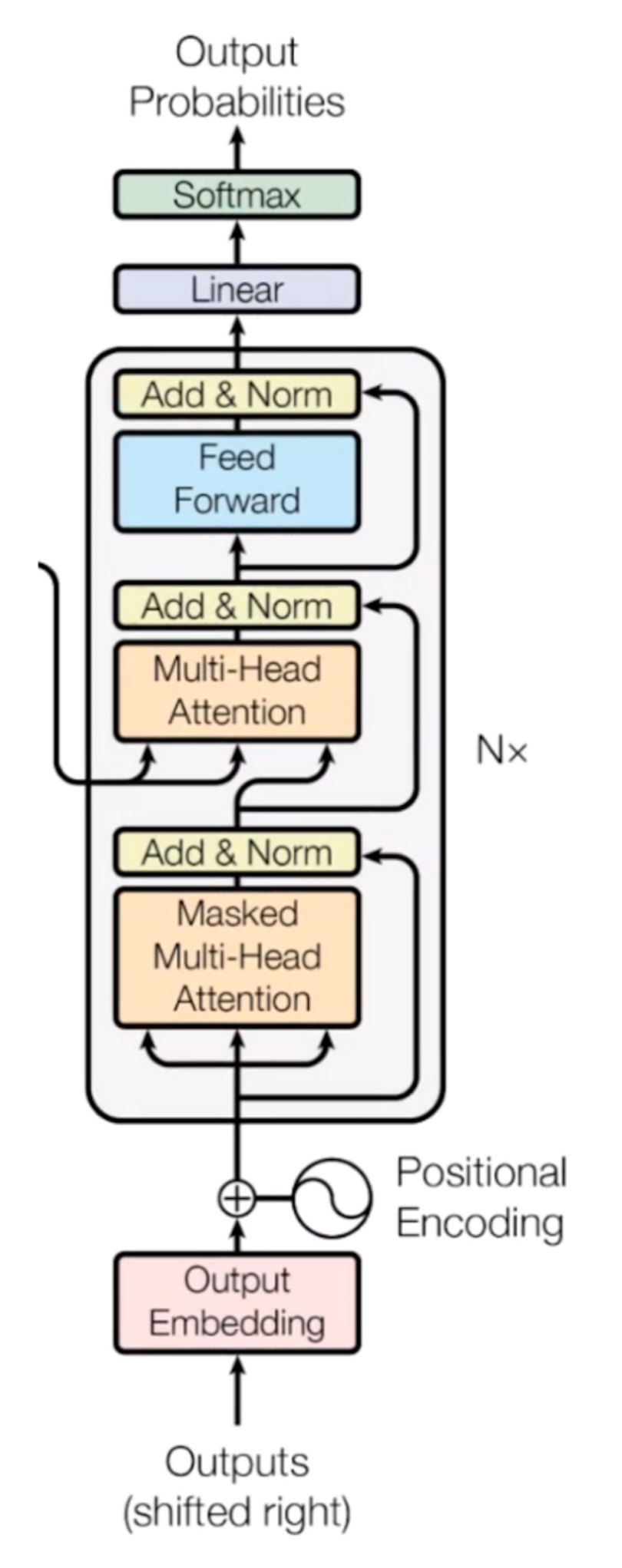

The Transformer Model

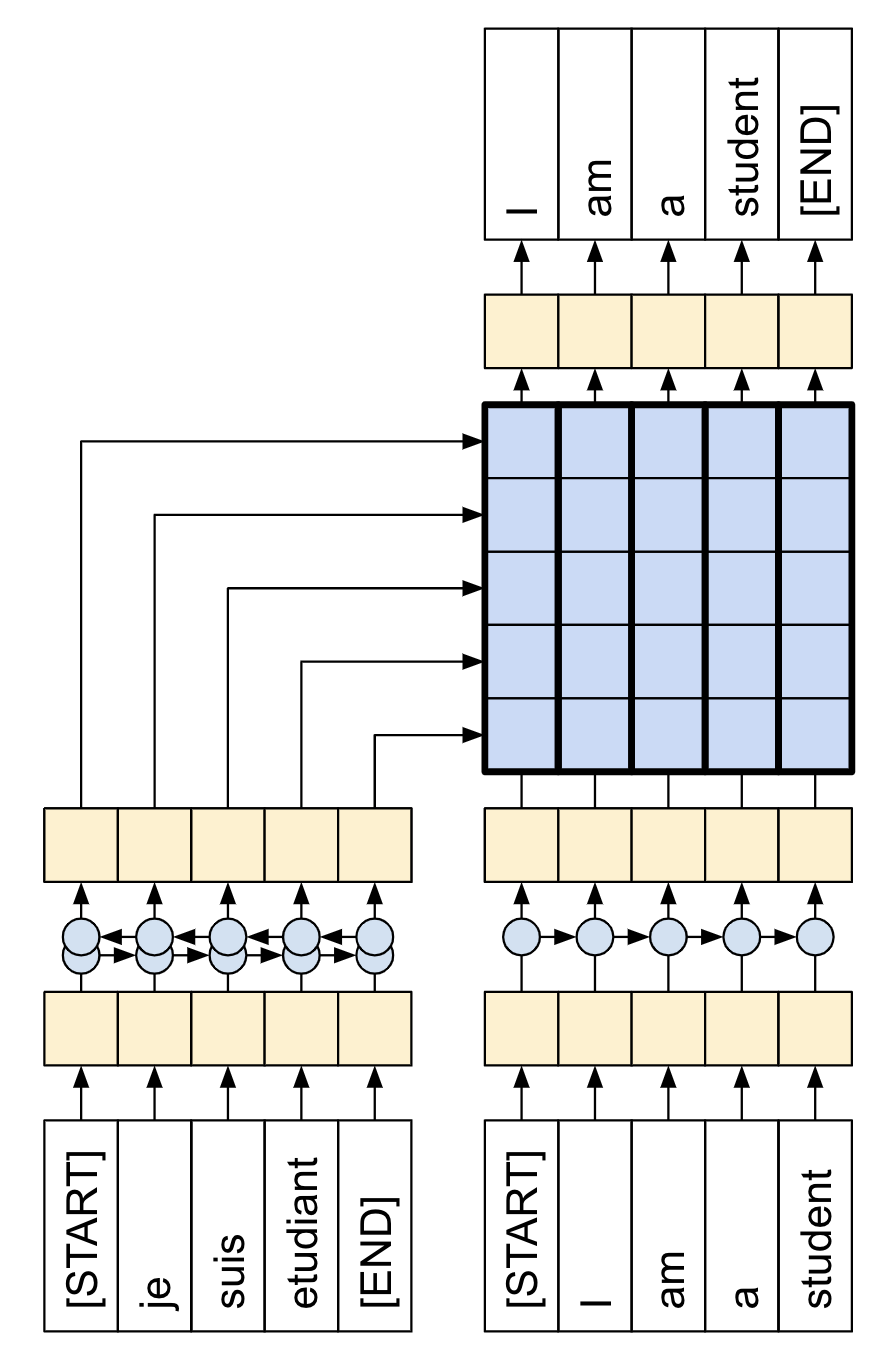

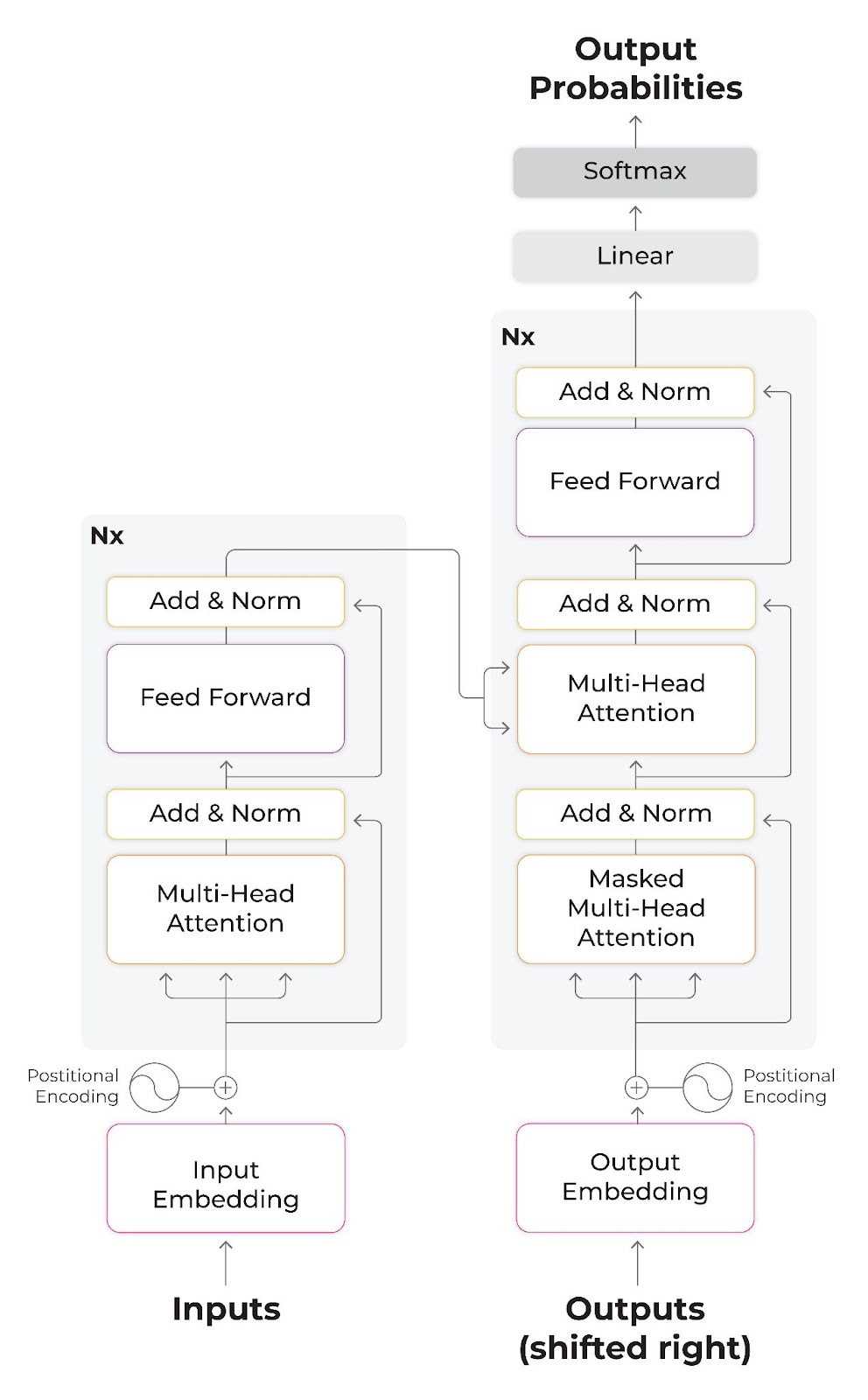

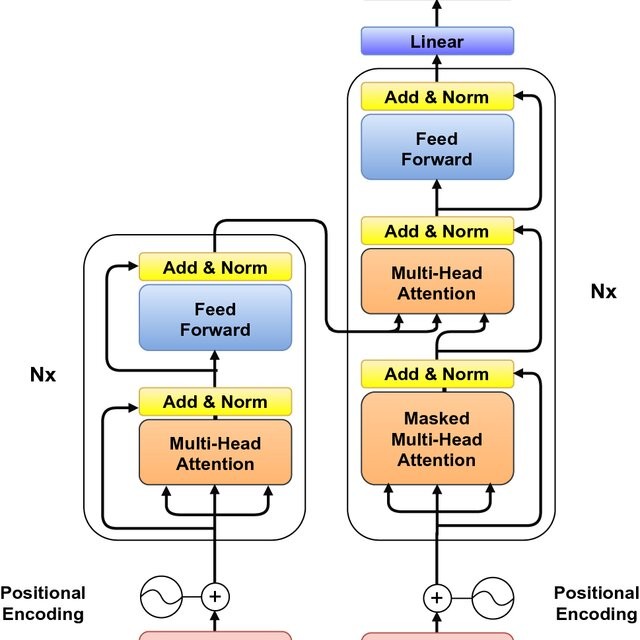

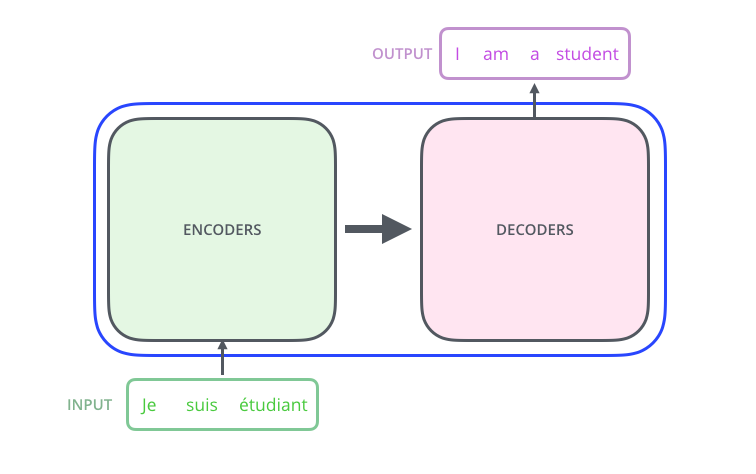

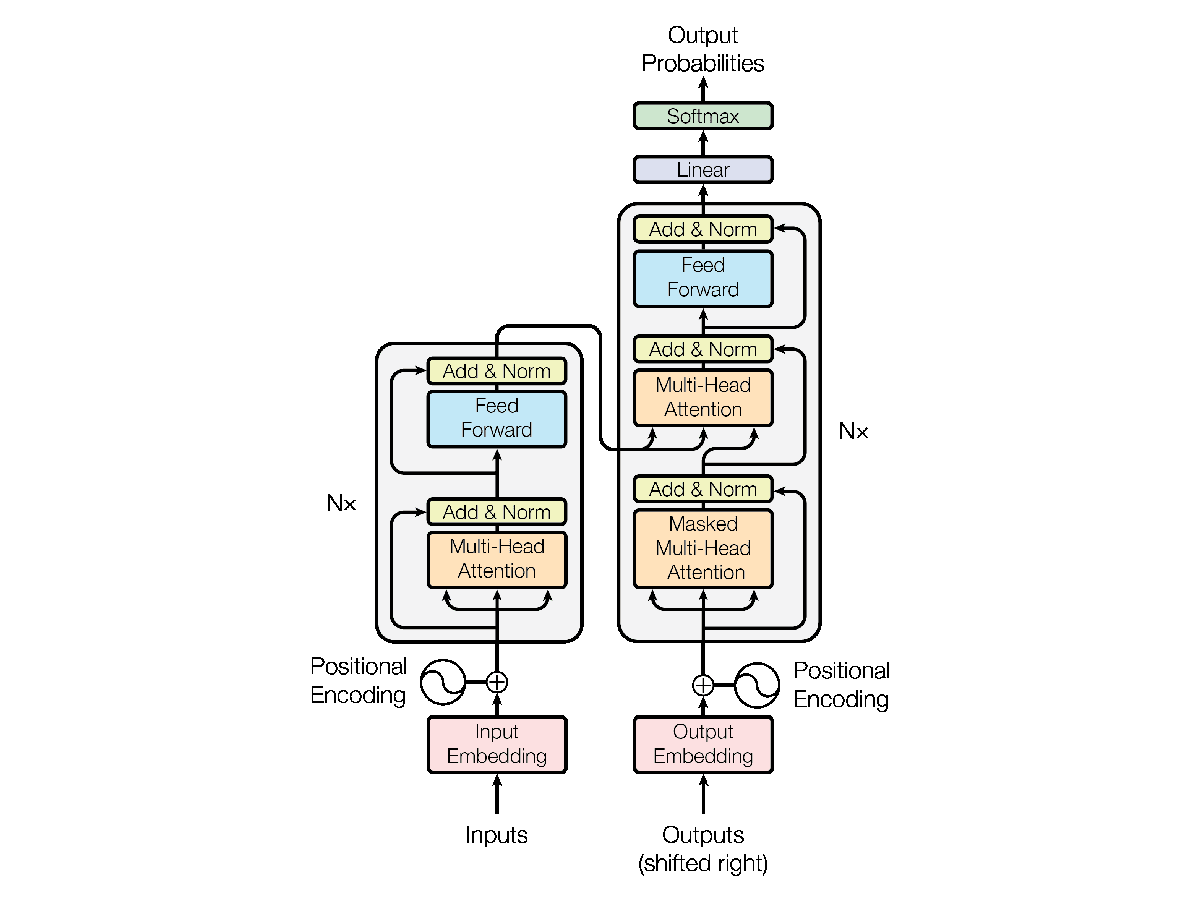

We have already covered self-attention as implemented by the Transformer attention mechanism for neural machine translation. We now turn to the details of the Transformer architecture itself to see how self-attention can be implemented without relying on recurrence or convolutions. In this tutorial, […]

How Transformers and Large Language Models (LLMs) Work — A Comprehensive Guide Using BERT, GPT, and T5, by Francesco Strafforello

Neural machine translation with a Transformer and Keras, Text

Transformer Neural Networks: A Step-by-Step Breakdown

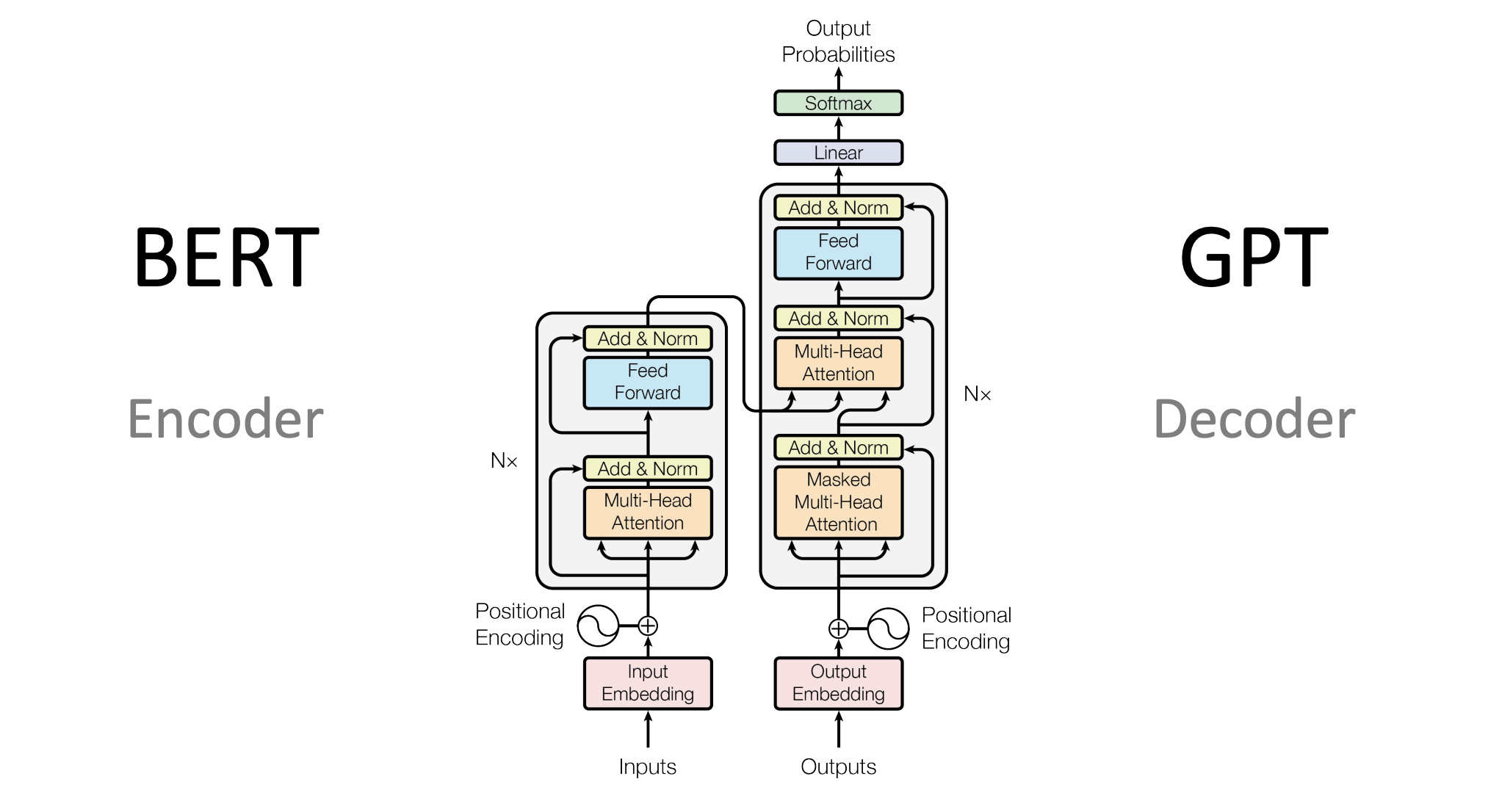

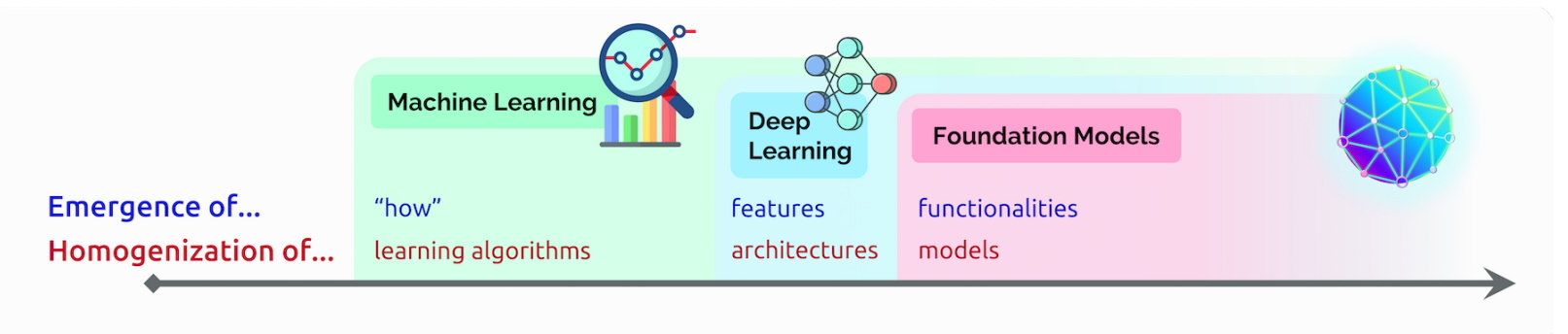

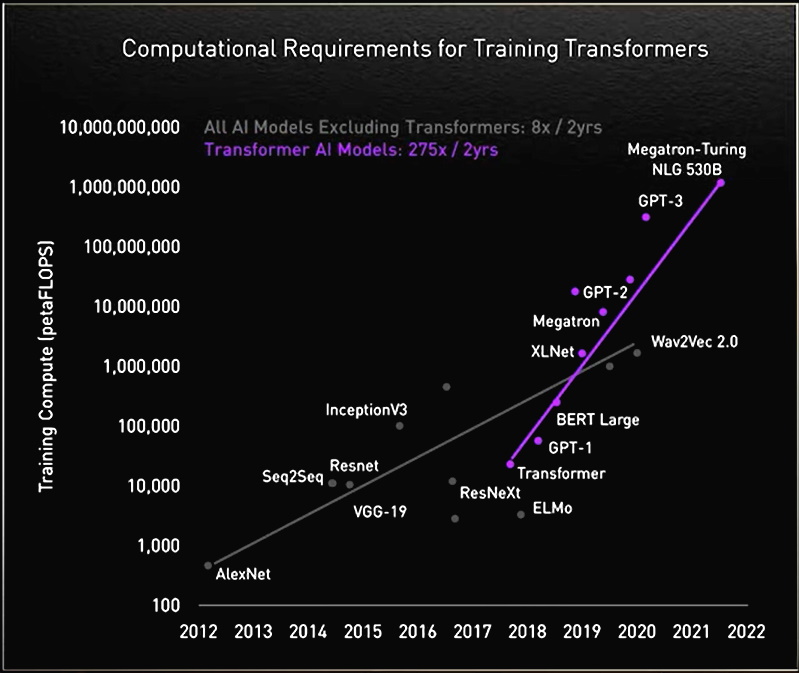

Foundation Models, Transformers, BERT and GPT

From Transformer to LLM: Architecture, Training and Usage

Generative pre-trained transformer - Wikipedia

Unleashing the Power of BERT: How the Transformer Model Revolutionized NLP

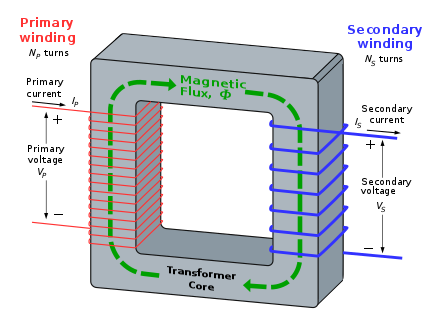

What Is a Transformer Model?

Revolutionizing Language AI: Unleashing the Power of Transformer-Based Models for Unprecedented NLP Breakthroughs

The Illustrated Transformer – Jay Alammar – Visualizing machine learning one concept at a time.

The Transformer Model: Revolutionizing Natural Language Processing

How Transformers Work. Transformers are a type of neural…, by Giuliano Giacaglia
